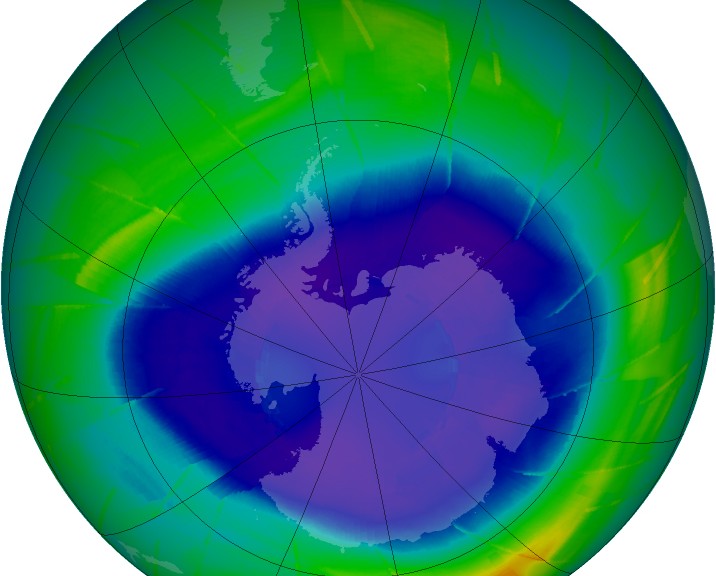

Featured photo courtesy of NASA Goddard Space Flight Center.

Introduction:

Earlier in the Sunlight Series I wrote about electromagnetic energy being radiated by the sun, and how it’s mostly infrared, visible light, and ultraviolet (UV) radiation.

From my last post, we know that the atmosphere absorbs electromagnetic radiation before it hits Earth’s surface. This radiation absorption differs depending on the wavelength of the entering electromagnetic radiation. I also wrote about how UV radiation is generally split into UV-A, UV-B, and UV-C, with UV-C being the highest in energy, and UV-A being the lowest. UV-C is entirely screened out by a combination of oxygen and ozone in the atmosphere. UV-B is almost entirely screened out, with about 97-99% being absorbed by the ozone layer. UV-A passes through with little absorption by the atmosphere.

UV-B is the type of electromagnetic radiation that causes our skin to produce the extremely important and often overlooked Vitamin D, but with excessive exposure, or a high sensitivity, also causes sunburns. Sunburns are linked to skin cancers. From this, it is intuitive that a certain degree of UV-B exposure is healthy, but too much can lead to skin damage. What is the right amount? That is a complicated subject that I will delve into in a future post. For now, I want to compare the amount of UV radiation hitting Earth’s surface today with pre-industrial levels.

The ozone layer, which absorbs UV-B and UV-C radiation, has been impacted by human activities. This process is known as the depletion of the ozone layer, or ozone depletion. Ozone depletion would, theoretically, lead to an increase in UV radiation hitting the Earth’s surface and is generally what began the practice of slathering yourself in sunscreen when exposed. This process is misunderstood, so I will discuss the actual scientific findings below.

However, there is a much lesser-known competing effect to ozone depletion called global dimming. This is another anthropogenic effect we are having on the atmosphere (there are quite a few…). It is essentially the effect of all the particles we release into the atmosphere via combustion, which leads to an overall reduction in the amount of sunlight, and thus UV, hitting Earth’s surface.

Shocking, isn’t it? We are told to protect ourselves from the sun, and the general thinking is that the sunlight hitting the earth’s surface is stronger today than in centuries past, when in fact the opposite is true. Let’s look a little more in detail at both of the processes. This post will describe the ozone layer and how it is depleted, and will also describe global dimming and provide a comparison of the two effects, and how concerns about increased UV are largely overblown.

Depletion of the Ozone Layer: More UV

The ozone layer, which is about 20-40 km from Earth’s surface, has a relatively high concentration of ozone (O3). In the ozone layer, ozone concentration is still quite low but is generally more than 10 times higher than the ozone concentration at sea level. Thus, the dominant form of oxygen in the ozone layer is still diatomic oxygen (O2), but we call it the ozone layer since it has more O3 than anywhere else and O3 is what shields the surface of Earth from UV radiation.

Ozone concentrations vary naturally with seasons. In the northern hemisphere, the ozone layer is thickest during the spring, and thinnest during the fall. This is due to atmospheric currents (Brewer-Dobson circulation) that are quite complicated and beyond the scope of this post.

Human activities have decreased the amount of ozone in the ozone layer through the release of compounds aptly named ozone depleting substances (ODS). The most famous examples of ODS are chlorofluorocarbons, or CFCs. CFCs were used as refrigerants in many applications, including refrigerators and air conditioners, and as propellants in bug and hair sprays. They are gases that, upon being irradiated by high-energy radiation (like UV), release unstable atoms that react with O3. In the case of a CFC, the unstable atom released is chlorine (Cl), and for some other ODS the unstable atom is bromine (Br). This leads to a depletion of O3 in the atmosphere via a multi-step chemical reaction.
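For readers who want to see the multi-step reaction written out, here is a simplified sketch of the standard textbook chlorine cycle. It is not taken from the sources for this post, and the choice of CFC-11 (CCl3F) as the example molecule is my own assumption for illustration; real stratospheric chemistry involves many more steps.

```latex
\begin{align*}
\mathrm{CCl_3F} + h\nu &\rightarrow \mathrm{CCl_2F} + \mathrm{Cl} \\
\mathrm{Cl} + \mathrm{O_3} &\rightarrow \mathrm{ClO} + \mathrm{O_2} \\
\mathrm{ClO} + \mathrm{O} &\rightarrow \mathrm{Cl} + \mathrm{O_2} \\
\text{net:}\quad \mathrm{O_3} + \mathrm{O} &\rightarrow 2\,\mathrm{O_2}
\end{align*}
```

Notice that the chlorine atom consumed in the second step is released again in the third, so a single atom can go around the loop many times. That is why small amounts of ODS can have an outsized effect.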

This mechanism was proposed in 1974 and is known as the Rowland-Molina hypothesis, which has been supported experimentally many times over. In 1985, a paper was released that documented a large ozone ‘hole’ over Antarctica. This was the first observed instance of concentrated ozone depletion. Once it was known that humans were having an impact on ozone concentrations, an international treaty, the Montreal Protocol of 1987, was enacted to phase out CFCs and eventually the less ozone-depleting HCFCs. Scientists believe that if the Montreal Protocol is complied with by all that signed, the ozone layer should recover completely by 2050.

The extent of the ozone depletion at any one time or place is very complicated. The polar regions of the earth (Arctic and Antarctic) tend to see the worst ozone depletion during their respective springtime (via Brewer-Dobson circulation, as mentioned above), with levels reaching 33% of pre-1980 values (part of this is natural, but is enhanced by ODS). At latitudes other than the poles, however, the loss is much less concerning. According to Prof. Muir at the University of Oregon, there is essentially no ozone loss at latitudes between 25°N and 25°S, which is roughly tropic-to-tropic. Latitudes from 35°-60° (N or S) generally see a loss of about 3-6%. Above 60° (N or S), the losses become more significant. There is one exception to this, however. After spring, the ozone hole over the southern pole breaks up and ozone-depleted air circulates toward lower latitudes, which causes a sharp, but temporary, drop in overhead ozone concentrations in New Zealand (and Australia to a degree).
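If it helps to see those latitude bands laid out, here is a minimal Python sketch that simply looks up the figures quoted above. The function name `quoted_ozone_loss` and the city latitudes are my own illustrative assumptions (the latitudes are approximate), and the function deliberately returns `None` wherever the paragraph gives no number, rather than guessing.

```python
from typing import Optional, Tuple


def quoted_ozone_loss(latitude_deg: float) -> Optional[Tuple[float, float]]:
    """Return the (low, high) percent ozone loss quoted in the post.

    Returns None for latitudes where the post gives no figure
    (between 25 and 35 degrees, and above 60 degrees).
    """
    lat = abs(latitude_deg)  # the quoted bands are symmetric, N or S
    if lat > 90:
        raise ValueError("latitude must be between -90 and 90 degrees")
    if lat <= 25:
        return (0.0, 0.0)  # "essentially no ozone loss"
    if 35 <= lat <= 60:
        return (3.0, 6.0)  # "a loss of about 3-6%"
    return None  # no number quoted for this band


# Approximate city latitudes, chosen only for illustration.
for city, lat in [("Singapore", 1.35), ("Toronto", 43.65), ("Reykjavik", 64.15)]:
    print(city, quoted_ozone_loss(lat))
```

This is only a lookup of the numbers in this post, not a model of the ozone layer. It ignores seasons and the temporary springtime drop over New Zealand mentioned above.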

Compliance with the Montreal Protocol has been successful: CFCs are now phased out, and the less damaging but still concerning HCFCs are to be phased out by 2030. As a result, although ozone levels have been compromised at far northern or far southern latitudes, the ozone levels are recovering. Anywhere else, including everything between the tropics, has not seen a significant change in ozone levels. Based on this, where most people are living, ozone depletion has not increased surface UV radiation noticeably when compared to pre-industrial levels (a few percent at most).

As mentioned in the introduction, however, there is a competing effect to ozone depletion called global dimming, which more than makes up for a bit less ozone.

Global Dimming: Less UV

Global dimming is a lesser-known human-caused phenomenon that is affecting the way the sun’s radiation is interacting with our planet. Aerosols, mostly in the form of soot, are tiny particles that have been increasing in concentration as humanity has aggressively pursued combustion energy over the last few centuries and especially in the last several decades. Since the 1950s, measurement stations all over the world have recorded a substantial decrease in the amount of radiation hitting Earth’s surface by as much as 10 to 37 percent (from The Sunlight Solution, by Laurie Winn Carlson).

Aerosols not only block sunlight directly by absorbing the rays, but they also allow for the formation of water droplets in the upper atmosphere, increasing cloudiness. Clouds block out sunlight, as anyone who has been outside on a cloudy day can attest. On the whole, the process of global dimming has actually decreased the amount of radiation hitting the surface of the earth despite ozone holes and greenhouse gases. This process does not occur equally across all wavelengths of radiation, but will affect all of them to some degree. Truth be told, there is a rare phenomenon called ‘cloud UV enhancement’, where surface UV can be enhanced if a day is partly cloudy and the clouds are arranged just so. This is rare, however, occurring on about 1.4-8% of measured partly cloudy days. Generally speaking, aerosols and clouds reduce surface UV. Some pundits have actually remarked that global dimming is saving us from both increased UV due to ozone depletion and the enhanced greenhouse effect.
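To get a rough feel for how the two effects stack up, here is a back-of-the-envelope sketch in Python. It rests on assumptions that are mine, not measurements: I take "a few percent at most" from the ozone section to mean a 3% increase, I apply the 10 to 37 percent dimming range directly to UV (even though dimming is not equal across wavelengths), and I treat the two effects as simply multiplying together. The function name `net_uv_change` is made up for this example.

```python
def net_uv_change(ozone_uv_increase: float, dimming_reduction: float) -> float:
    """Fractional net change in surface UV, assuming the two effects multiply.

    ozone_uv_increase: fractional UV increase from ozone loss (0.03 means +3%).
    dimming_reduction: fractional reduction from global dimming (0.10 means -10%).
    """
    return (1.0 + ozone_uv_increase) * (1.0 - dimming_reduction) - 1.0


# Assumption: "a few percent at most" is taken as 3% for illustration only.
ASSUMED_OZONE_UV_INCREASE = 0.03

# The 10% and 37% ends of the dimming range quoted earlier in this section.
for dimming in (0.10, 0.37):
    change = net_uv_change(ASSUMED_OZONE_UV_INCREASE, dimming)
    print(f"dimming {dimming:.0%}: net change {change:+.1%}")
```

Under these assumptions the net change is negative at both ends of the range, which is the point of this section: the dimming figure is larger than the ozone figure. Treat the exact numbers as illustrative only.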

Although the process of global dimming appears to be a saving grace, reduced radiation hitting the surface is having a dramatic impact on agricultural production and evaporation rates worldwide. Also, even on a sunny day, there is usually less wonderful UV-B radiation to create Vitamin D in your body. Just another way we humans are improving things around here.

In conclusion, the idea that surface UV radiation is radically different today than in the past is a misguided notion. With only a few exceptions, it hasn’t really changed that much, and still varies dramatically day-to-day, as it always has.

If humans in the past survived (even thrived in) the sun’s radiation, then humans today can as well. It is not as simple as SUN = CANCER. Not even close. We spend close to 90% of our time indoors in modern times, which is a historical anomaly. Now that we know that the sun’s radiation hitting the surface hasn’t changed all that much, why do we fear it?

Next post: How Vitamin D is produced in your skin, and why it’s so important!

As always, please leave a comment or question!

It is a well-known fact by some that aircraft emissions are one of the main causes of global dimming.

Re Ozone layer depletion: What will happen if the use of fossil fuels was banned/ forbidden/ halted permanently.

I hate to think about it. A deep blue sky instead of a pastel-blue one.

Thanks for the comment William! I’ve thought about that as well…we might be in a fine balance with fossil fuel usage due to global dimming (global dimming vs ozone depletion, and also global warming might be slowed by global dimming). It’s hard to know for sure.

Really interesting that aircraft could be the main source of global dimming. They definitely have the altitude.

Thankfully, all signs point to the ozone layer returning in strength. Thanks again for commenting!